EverMind, an incubation project under the research team of Shanda Investment Group, the global investment firm founded by Chinese billionaire Chen Tianqiao, has launched an open-source long-term memory system intended to serve as foundational data infrastructure for next-generation AI agents.

The system, EverMemOS, draws inspiration from human memory processes, spanning sensory encoding, hippocampal indexing, and cortical long-term storage, with the hippocampus and prefrontal cortex jointly forming and retrieving memories, the team announced. This “brain-like” architecture is central to the system’s design, enabling AI models to think, store experiences, and develop in a manner closer to human cognition.

Long-term memory is emerging as a strategic feature for leading AI platforms such as Claude and ChatGPT, said Chen, who also co-founded the Tianqiao and Chrissy Chen Institute. Memory, he added, will become the core differentiator and inflection point for future AI applications — the key to transforming AI from a reactive tool into an autonomous, evolving intelligent agent.

At the inaugural Symposium for AI Accelerated Science (AIAS 2025), held by the Tianqiao and Chrissy Chen Institute (TCCI) in San Francisco on Oct. 27–28, a leading researcher outlined five core capabilities of what he described as “discovery-based intelligence,” including long-term memory.

He argued that today’s mainstream AI systems are built on a “spatial-structure paradigm,” one that is instantaneous and static, relying on massive parameter scaling to fit snapshots of the world. In contrast, the human brain operates under a “temporal-structure paradigm” that is continuous and dynamic, aiming to manage and predict information as it unfolds over time. Within this framework, long-term memory serves as the essential bridge linking time and intelligence.

Inspired by this principle, the team introduced EverMemOS, a system designed to give AI continuity across time. The technology enables models to remember, adapt, and evolve within a temporal stream, representing what the institute views as a foundational step toward more human-like, time-aware artificial intelligence.

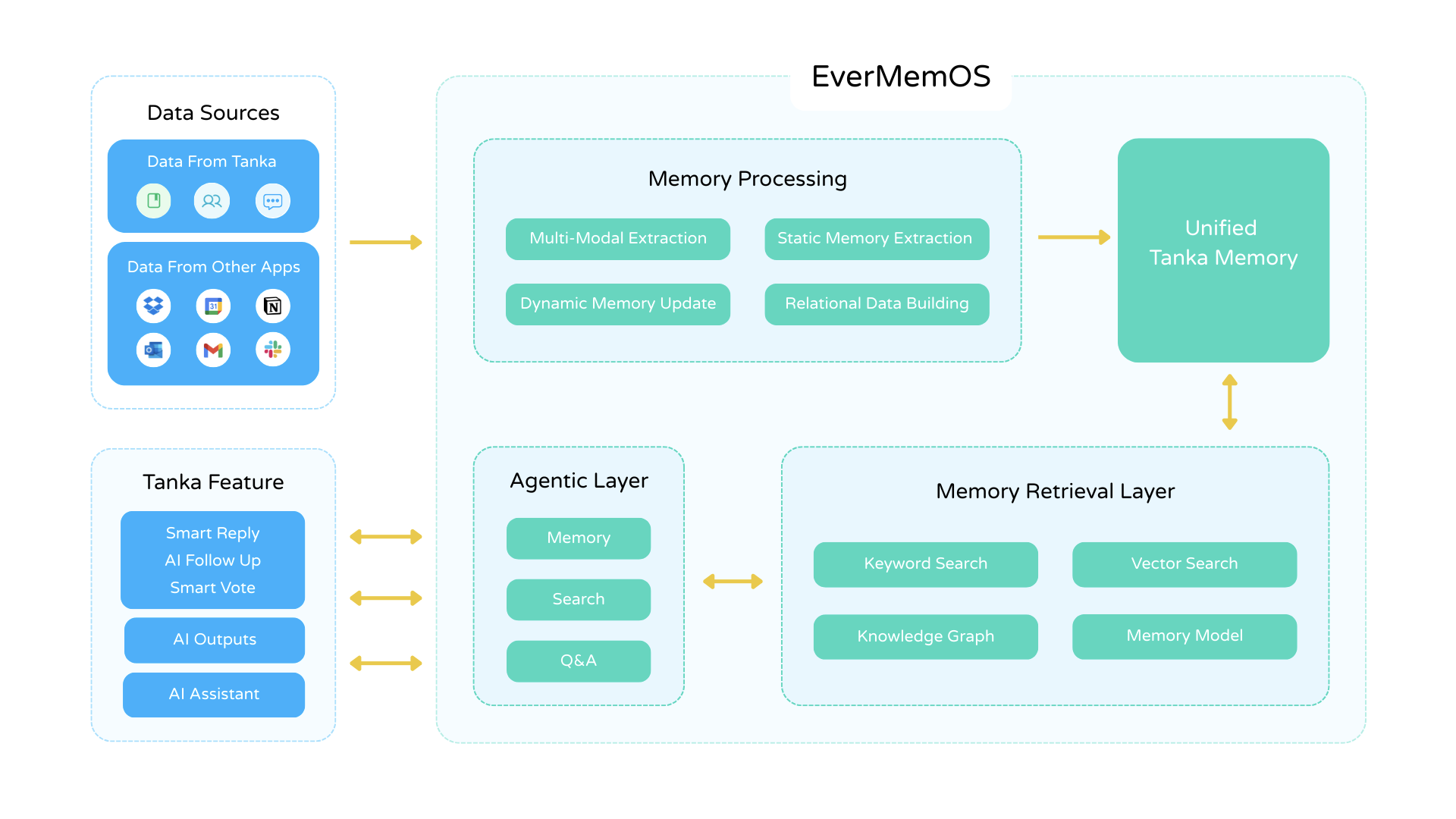

Against this backdrop, the EverMind team launched EverMemOS, a memory system that it says delivers advances in both application scenarios and technical performance.

- In terms of application scenarios: According to the team, it is the industry's first memory system capable of supporting both one-on-one conversations and complex multi-user collaboration. Tanka, an AI-native product, is an early adopter.

- In terms of technological performance: Using a bio-inspired “engram” heuristic for memory retrieval and application, EverMemOS scored 92.3% on LoCoMo and 82% on LongMemEval-S, two prominent long-term memory evaluation datasets. The team says both scores exceed previous state-of-the-art (SOTA) results on these benchmarks.

EverMemOS Four-Layer Architecture

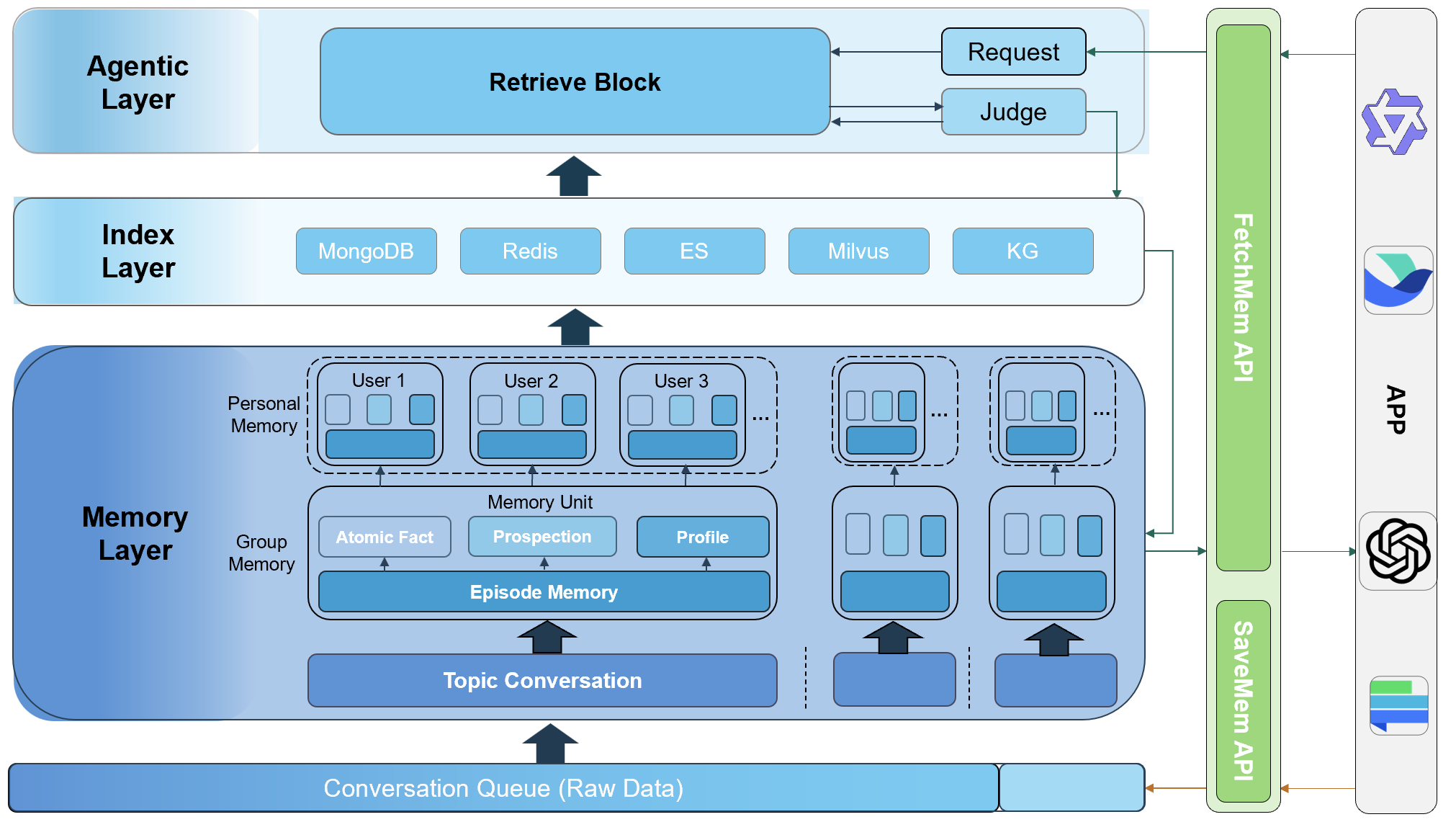

Inspired by the memory mechanisms of the human brain, EverMemOS introduces an innovative four-layer architecture, drawing analogies to the brain’s key functional areas:

- Agentic Layer — Responsible for task understanding, decomposition, and generation. This can be compared to the role of the "prefrontal cortex" in attention, planning, and executive control.

- Memory Layer — Manages the extraction and structured storage of long-term memory, corresponding to the function of the "cerebral cortical network" in long-term consolidation and storage.

- Index Layer — Connects and efficiently retrieves memories using embeddings, key-value pairs, and knowledge graphs. This resembles the "hippocampus," which is responsible for associating and rapidly indexing memories.

- API/MCP Interface Layer — Seamlessly integrates with enterprise applications, serving as the AI's sensory interface with the external environment.
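The division of labor among the four layers can be illustrated with a toy sketch. Everything below is hypothetical: the class names, the `handle_request` function, and the word-count similarity (standing in for real embeddings) are assumptions made for illustration, not EverMemOS's actual API or implementation.

```python
import math
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class MemoryLayer:
    """Long-term store mapping a memory id to its text (hypothetical)."""
    records: dict = field(default_factory=dict)

    def store(self, text: str) -> int:
        memory_id = len(self.records)
        self.records[memory_id] = text
        return memory_id


def _bag_of_words(text: str) -> Counter:
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class IndexLayer:
    """Index over memory ids; word counts stand in for real embeddings."""
    vectors: dict = field(default_factory=dict)

    def add(self, memory_id: int, text: str) -> None:
        self.vectors[memory_id] = _bag_of_words(text)

    def search(self, query: str, top_k: int = 1) -> list:
        q = _bag_of_words(query)
        ranked = sorted(
            self.vectors,
            key=lambda mid: _cosine(q, self.vectors[mid]),
            reverse=True,
        )
        return ranked[:top_k]


@dataclass
class AgenticLayer:
    """Coordinates storage and retrieval on behalf of a task."""
    memory: MemoryLayer
    index: IndexLayer

    def remember(self, text: str) -> int:
        memory_id = self.memory.store(text)
        self.index.add(memory_id, text)
        return memory_id

    def recall(self, query: str, top_k: int = 1) -> list:
        return [self.memory.records[mid] for mid in self.index.search(query, top_k)]


def handle_request(agent: AgenticLayer, action: str, payload: str):
    """Stand-in for the API/MCP interface layer: routes external calls."""
    if action == "remember":
        return agent.remember(payload)
    if action == "recall":
        return agent.recall(payload)
    raise ValueError(f"unknown action: {action}")


agent = AgenticLayer(MemoryLayer(), IndexLayer())
handle_request(agent, "remember", "Alice prefers morning meetings")
handle_request(agent, "remember", "Bob is allergic to peanuts")
recalled = handle_request(agent, "recall", "what is Bob allergic to")
```

In this sketch the index layer only returns ids and the memory layer only holds content, mirroring the hippocampus-and-cortex analogy in the list above; a real system would replace the word-count vectors with learned embeddings and add key-value and graph indexes.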

Three Core Features of EverMemOS

Feature 1: From a “Memory Database” to a “Memory Processor”. EverMemOS’s foremost innovation is that it functions not just as a repository for memory, but as a processor for memory applications. It addresses the core limitation of existing solutions that “just find data without actually using it.” Through its unique reasoning and integration mechanisms, EverMemOS enables memories to actively and instantly shape the model’s thought processes and responses, ensuring that every statement made by the AI is grounded in a long-term understanding of the user. This delivers truly coherent and personalized interactions.

Feature 2: Innovative Hierarchical Memory Extraction and Dynamic Organization. At the heart of EverMemOS lies its novel approach to “hierarchical memory extraction.” Instead of treating memory as a chaotic collection of text fragments, it extracts continuous semantic blocks as contextual memory units and dynamically organizes them into structured memories. This layered memory organization links related memories together, overcoming the limitations of pure text-based similarity searches that struggle to capture implicit context, and lays a solid foundation for advanced memory utilization.
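To make the idea of extracting continuous semantic blocks more concrete, here is a minimal sketch. It assumes that word overlap (Jaccard similarity) between adjacent messages is an acceptable stand-in for semantic continuity; the function names, the threshold, and the output structure are all hypothetical and do not describe how EverMemOS actually performs extraction.

```python
def _words(text: str) -> set:
    return set(text.lower().split())


def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity, used here as a crude proxy for semantics."""
    wa, wb = _words(a), _words(b)
    union = wa | wb
    return len(wa & wb) / len(union) if union else 0.0


def extract_blocks(messages: list, threshold: float = 0.15) -> list:
    """Group consecutive messages into blocks while adjacent ones stay similar."""
    blocks = []
    for message in messages:
        if blocks and jaccard(blocks[-1][-1], message) >= threshold:
            blocks[-1].append(message)  # continues the current block
        else:
            blocks.append([message])    # topic shift: start a new block
    return blocks


def organize(blocks: list) -> list:
    """Turn each block into a structured unit keyed by its shared words."""
    structured = []
    for block in blocks:
        shared = set.intersection(*(_words(m) for m in block))
        structured.append({"keywords": sorted(shared), "messages": block})
    return structured


messages = [
    "the quarterly budget review is on friday",
    "the budget review needs the sales figures",
    "my dog has a vet appointment",
    "the vet appointment is at noon",
]
blocks = extract_blocks(messages)
memories = organize(blocks)
```

With these four messages the sketch yields two blocks, one about the budget review and one about the vet appointment, each stored with the words its messages share. A production system would presumably use embeddings or a language model for boundary detection rather than raw word overlap.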

Feature 3: The Industry’s First Scalable Modular Memory Framework. In real-world applications, memory requirements vary greatly across scenarios. To address this, EverMemOS introduces a scenario-based, scalable memory framework that supports different types of memory needs. Work scenarios may call for high-precision, structured information, while companionship scenarios may call for empathy and an understanding of implicit emotions; in each case, EverMemOS is designed to select a suitable strategy for organizing and using memory. The team says this overcomes the limitations of traditional single-form memory solutions and meets the changing needs of diverse environments.

EverMind has released the open-source version of EverMemOS on GitHub for developers and AI teams to deploy and try out. It is available at https://github.com/EverMind-AI/EverMemOS/. The team plans to launch a cloud service version later this year, offering enterprise users more robust technical support, persistent data storage, and a scalable experience. Interested developers and businesses can leave their email address on the official website (http://everm.com) for a chance to be among the first to try the new service.
